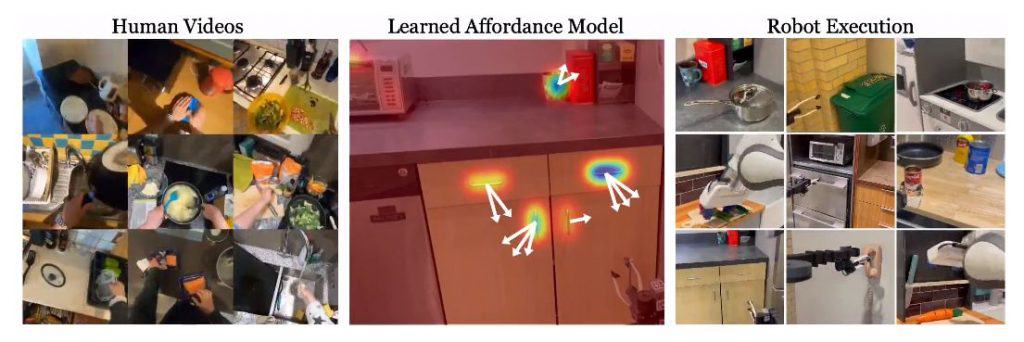

Meta AI unveiled a new algorithm that enables robots to learn and replicate human actions by watching YouTube videos. In a recent paper entitled “Affordances from Human Videos as a Versatile Representation for Robotics,” the authors explore how videos of human interactions can be leveraged to train robots to perform complex tasks.

This research aims to bridge the gap between static datasets and real-world robot applications. While previous models have shown success on static datasets, applying these models directly to robots has remained a challenge. The researchers propose that training a visual affordance model on internet videos of human behavior could be a solution. This model estimates where and how a human is likely to interact in a scene, providing valuable information for robots.

The concept of “affordances” is central to this approach. Affordances refer to the potential actions or interactions an object or environment offers. By understanding affordances through human videos, the robot gains a versatile representation that enables it to perform various complex tasks. The researchers integrate their affordance model with four different robot learning paradigms: offline imitation learning, exploration, goal-conditioned learning, and action parameterization for reinforcement learning.

To extract affordances, the researchers utilize large-scale human video datasets like Ego4D and Epic Kitchens. They employ off-the-shelf hand-object interaction detectors to identify the contact region and track the wrist’s trajectory after contact. However, an important challenge arises when the human is still present in the scene, causing a distribution shift. To address this, the researchers use available camera information to project the contact points and post-contact trajectory to a human-agnostic frame, which serves as input to their model.
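The projection step can be illustrated with a minimal sketch. The article only says that "available camera information" is used, so the choice of a 3x3 homography between the contact frame and a frame without the human is an assumption made here for illustration, and the function names `apply_homography` and `to_human_agnostic_frame` are hypothetical rather than taken from the paper's code.

```python
import numpy as np

def apply_homography(H, points):
    """Map an (N, 2) array of pixel coordinates through a 3x3 homography H."""
    pts = np.asarray(points, dtype=float)
    # Append a column of ones to move to homogeneous coordinates.
    homog = np.hstack([pts, np.ones((len(pts), 1))])
    mapped = homog @ np.asarray(H, dtype=float).T
    # Divide by the last coordinate to return to pixel coordinates.
    return mapped[:, :2] / mapped[:, 2:3]

def to_human_agnostic_frame(H, contact_points, wrist_trajectory):
    """Re-express contact points and the post-contact wrist trajectory
    in a frame where the human is not visible.

    H is assumed (for this sketch) to map pixels of the contact frame
    to pixels of the human-agnostic frame.
    """
    contact = apply_homography(H, contact_points)
    trajectory = apply_homography(H, wrist_trajectory)
    return contact, trajectory

if __name__ == "__main__":
    # Toy camera motion: a pure shift of 10 px right and 5 px up.
    H = np.array([[1.0, 0.0, 10.0],
                  [0.0, 1.0, -5.0],
                  [0.0, 0.0, 1.0]])
    contact, trajectory = to_human_agnostic_frame(
        H, [[100, 50]], [[100, 50], [120, 60]]
    )
    print(contact)
    print(trajectory)
```

In practice the transform would have to be estimated from the video itself, and the projected points would then serve as training targets for the affordance model.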

Previously, robots could mimic actions, but only within the specific environments they had been trained in. With the latest algorithm, the researchers have made significant progress in “generalizing” robot actions: robots can now apply their acquired knowledge in new and unfamiliar environments. This achievement aligns with the vision of achieving Artificial General Intelligence (AGI) as advocated by AI researcher Yann LeCun.

Meta AI is committed to advancing the field of computer vision and is planning to share its project’s code and dataset. This will enable other researchers and developers to further explore and build upon this technology. With increased access to the code and dataset, the development of self-learning robots capable of acquiring new skills from YouTube videos will continue to progress.

By leveraging the vast amount of online instructional videos, robots can become more versatile and adaptable in various environments.

Read More: mpost.io

Compound

Compound  Wormhole

Wormhole  Sun Token

Sun Token  Staked HYPE

Staked HYPE  Movement

Movement  Popcat

Popcat  Coinbase Wrapped Staked ETH

Coinbase Wrapped Staked ETH  Ether.fi Staked BTC

Ether.fi Staked BTC  Mog Coin

Mog Coin  Amp

Amp  Gnosis

Gnosis  Akash Network

Akash Network  Livepeer

Livepeer  Swell Ethereum

Swell Ethereum  Polygon Bridged WBTC (Polygon POS)

Polygon Bridged WBTC (Polygon POS)  Trust Wallet

Trust Wallet  JUST

JUST  Polygon

Polygon  Olympus

Olympus  Terra Luna Classic

Terra Luna Classic  Polygon PoS Bridged WETH (Polygon POS)

Polygon PoS Bridged WETH (Polygon POS)  Legacy Frax Dollar

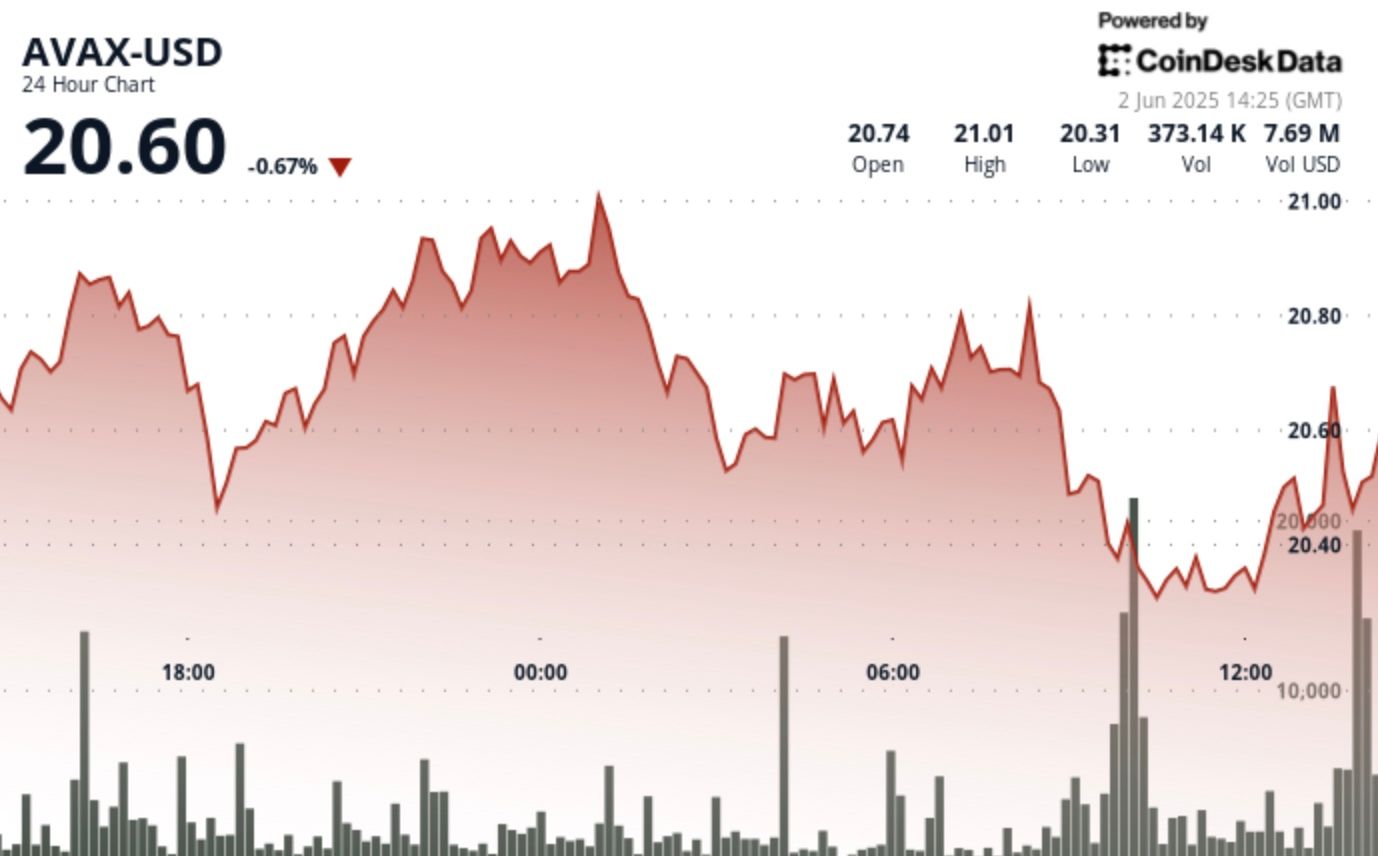

Legacy Frax Dollar  Wrapped AVAX

Wrapped AVAX  Binance-Peg BUSD

Binance-Peg BUSD  Plume

Plume  Global Dollar

Global Dollar  Axelar

Axelar  Ripple USD

Ripple USD  MANTRA

MANTRA  SuperVerse

SuperVerse  Safe

Safe  Bybit Staked SOL

Bybit Staked SOL  Turbo

Turbo  1inch

1inch  Berachain

Berachain  Creditcoin

Creditcoin  Cheems Token

Cheems Token  cat in a dogs world

cat in a dogs world  Aave USDC (Sonic)

Aave USDC (Sonic)  Quorium

Quorium  BTSE Token

BTSE Token  Dash

Dash  aBTC

aBTC  Kusama

Kusama  cWBTC

cWBTC  Decred

Decred  ai16z

ai16z